Ask any career advisor what students need more of, and mock interviews will be near the top of the list. Ask those same advisors how many mock interviews they can realistically facilitate each semester, and you will hear a number that is a small fraction of their student population. We built something to close that gap.

Today we are launching real-time AI mock interviews on Prentus, currently in beta. Students can practice interviewing with a voice-based AI agent that asks role-specific questions, responds naturally to their answers, and delivers detailed feedback the moment the session ends.

Why Traditional Mock Interviews Do Not Scale

The standard mock interview model depends on a scarce resource: a trained person sitting across from a student for 30 to 60 minutes. That person might be a career advisor, an alumni volunteer, or a peer counselor. Each of those options has real constraints.

Career advisors are already stretched thin across resume reviews, employer relations, and outcome reporting, a challenge that NACE has documented across institutions nationwide. Alumni volunteers are generous but inconsistent, and coordinating their schedules with students creates logistical overhead. Peer-to-peer practice is helpful but lacks the expertise to deliver actionable feedback.

The result is that most students go through their entire job search without ever doing a practice interview. They walk into their first real interview cold, and their performance reflects it.

How Voice-Based AI Interviewing Works

A student opens Prentus, selects the type of interview they want to practice (behavioral, technical, industry-specific), and optionally pastes a job description. The AI agent generates a question set tailored to that role, then begins the interview as a live voice conversation.

This is not a text prompt where students type answers at their own pace. It is a spoken conversation with natural pacing, follow-up questions, and the kind of pressure that mimics a real interview. The AI listens, adapts its follow-ups based on the student's responses, and keeps the conversation moving.

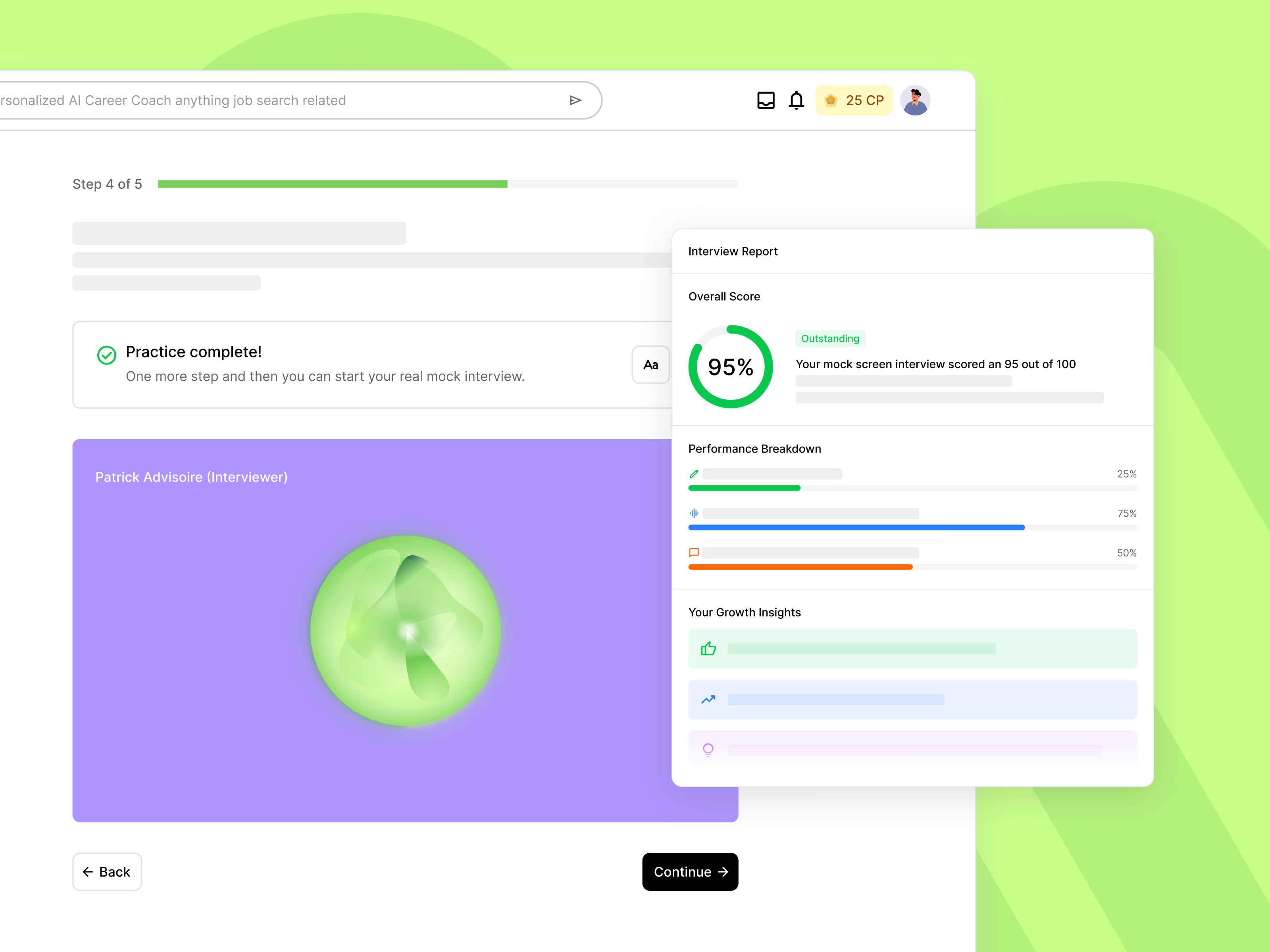

When the session ends, the student receives a structured feedback report covering:

- Content quality. Did the student answer the question directly? Were examples specific and relevant?

- Structure and clarity. Did the response follow a logical framework? Was it concise or did it meander?

- Communication patterns. Filler word frequency, pacing, and confidence indicators based on speech analysis.

- Actionable next steps. Specific suggestions for how to strengthen each answer if the student practices again.
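
For readers curious about what the communication-pattern metrics could look like in practice, here is a minimal sketch of computing filler word frequency and pacing from a transcript. It is an illustration only, not a description of how Prentus implements its speech analysis: the function name `speech_metrics`, the `FILLERS` list, and the idea of working from a plain-text transcript plus a duration are all assumptions made for the example.

```python
import re

# Hypothetical filler list for illustration; a real system would likely use a
# richer list and context (for example, "like" is not always a filler).
FILLERS = {"um", "uh", "like", "basically", "actually"}


def speech_metrics(transcript: str, duration_seconds: float) -> dict:
    """Return simple filler-word and pacing statistics for one spoken answer."""
    # Lowercase the transcript and keep runs of letters/apostrophes as words.
    words = re.findall(r"[a-z']+", transcript.lower())
    filler_count = sum(1 for word in words if word in FILLERS)
    minutes = duration_seconds / 60
    return {
        "word_count": len(words),
        "filler_count": filler_count,
        # Share of all spoken words that were fillers.
        "filler_rate": filler_count / len(words) if words else 0.0,
        # Pacing, expressed as words per minute.
        "words_per_minute": len(words) / minutes if minutes > 0 else 0.0,
    }


if __name__ == "__main__":
    answer = "Um, I led a team of, like, five people and, uh, we shipped on time."
    print(speech_metrics(answer, duration_seconds=6))
```

A report built on numbers like these could then flag an answer where fillers make up a large share of the words, or where the pace is noticeably fast or slow. Confidence indicators would need audio-level signals that a transcript alone does not capture.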

What Makes This Different

There are existing interview prep tools on the market. Most fall into two categories: pre-recorded video responses that students film and submit for asynchronous review, or text-based Q&A where students read a question and type an answer. Both have their place, but neither replicates what actually happens in an interview.

Interviews are live conversations. The pressure comes from thinking on your feet while someone is listening. The skill being tested is not writing ability. It is verbal communication under a mild amount of stress. That is why voice matters. Students need to practice the actual thing they will be doing, not a proxy for it.

The best way to get better at interviews is to do more interviews. AI makes it possible for students to practice as many times as they need, on their own schedule, without burning through limited advisor availability.

The other advantage is availability. A student preparing for an interview at 11 p.m. on a Sunday can run a full practice session. A student who wants to do five practice rounds before a Tuesday afternoon interview can do exactly that. There is no scheduling friction and no waiting.

Early Beta Feedback

We have been testing AI mock interviews with a group of institutional partners over the past several weeks. The feedback has been encouraging. Students report that the voice format feels significantly more realistic than text-based alternatives. Several beta testers mentioned that the follow-up questions were the most valuable part, because they forced deeper thinking rather than allowing rehearsed answers.

Career advisors in the beta noted that students came to in-person coaching sessions better prepared after using the AI mock interviews. Rather than replacing the human interaction, the AI practice sessions gave students a foundation so their time with an advisor could focus on higher-level strategy.

What Comes Next

This is a beta launch, and we are actively iterating based on feedback. Our roadmap includes industry-specific interview banks (healthcare, finance, tech, education), integration with job applications so students can practice for specific roles they have applied to, and analytics dashboards that give career services teams visibility into how their students are preparing. EDUCAUSE has documented growing demand for AI-powered student support tools across higher education.

If your institution wants to give students unlimited mock interview practice without adding to your team's workload, let's set up a conversation.